
Autonomous vehicles require an enormous amount and variety of technology to work. More than that, they require safe technology with extremely low failure rates.

Of all the required technology, the first point of entry is the sensor package installed on a vehicle. This package determines what a vehicle can sense about itself and its surroundings. Without this knowledge, an autonomous car simply cannot function. Add the need to function safely, and it becomes clear that an array of complementary and redundant sensors is required to deliver the necessary level of reliability.

The purpose of vehicle sensors can be divided into two categories: positioning (localisation) and perception.

Whether for positioning (localisation) or perception, the outputs of the individual sensors must be combined to provide optimal results, in a process called sensor fusion.

“It is absolutely necessary to employ some version of sensor fusion to achieve safe autonomy. How this sensor fusion is done is the question. At Hexagon, our approach is that the IMU is the core of the system—always available, but in need of support. All the possible aiding sensors can provide information to help and overlap with data even when one is unavailable.”
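As a rough illustration of the idea in the quote (an inertial core that is always available, corrected by aiding sensors whenever they have data), here is a minimal one-dimensional Kalman filter sketch. It is not Hexagon's SPAN implementation: the class name `ImuCoreFilter`, the noise values, the accelerometer bias and the outage window are all assumptions chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ImuCoreFilter:
    """Hypothetical 1D filter: IMU drives prediction, aiding fixes correct it."""
    pos: float = 0.0
    vel: float = 0.0
    # 2x2 covariance of [position, velocity]
    P: list = field(default_factory=lambda: [[1.0, 0.0], [0.0, 1.0]])
    accel_var: float = 0.04  # assumed accelerometer noise variance (m/s^2)^2

    def predict(self, accel: float, dt: float) -> None:
        """Dead-reckon with the IMU; uncertainty grows at every step."""
        self.pos += self.vel * dt + 0.5 * accel * dt * dt
        self.vel += accel * dt
        (a, b), (c, d) = self.P
        q = self.accel_var
        # P = F P F^T + Q with F = [[1, dt], [0, 1]]
        self.P = [
            [a + dt * (b + c) + dt * dt * d + q * dt**4 / 4, b + dt * d + q * dt**3 / 2],
            [c + dt * d + q * dt**3 / 2, d + q * dt * dt],
        ]

    def update_position(self, z: float, var: float) -> None:
        """Fuse a position fix from any aiding sensor (GNSS, UWB, ...)."""
        (a, b), (c, d) = self.P
        s = a + var                # innovation variance
        k0, k1 = a / s, c / s      # Kalman gain for H = [1, 0]
        y = z - self.pos           # innovation
        self.pos += k0 * y
        self.vel += k1 * y
        self.P = [[(1 - k0) * a, (1 - k0) * b], [c - k1 * a, d - k1 * b]]


def simulate(use_aiding: bool):
    """Drive 20 s with a biased IMU; aiding is lost between 8 s and 14 s."""
    dt, steps = 0.1, 200
    true_accel, imu_bias = 0.5, 0.1     # assumed values
    true_pos = true_vel = 0.0
    f = ImuCoreFilter()
    pos_var = []
    for step in range(steps):
        true_pos += true_vel * dt + 0.5 * true_accel * dt * dt
        true_vel += true_accel * dt
        f.predict(true_accel + imu_bias, dt)      # IMU is always available
        in_outage = 80 <= step < 140
        if use_aiding and step % 10 == 0 and not in_outage:
            f.update_position(true_pos, 0.25)     # 1 Hz aiding fix
        pos_var.append(f.P[0][0])
    return abs(f.pos - true_pos), pos_var


dr_err, _ = simulate(use_aiding=False)
fused_err, fused_var = simulate(use_aiding=True)
print(f"IMU only: {dr_err:.2f} m, fused: {fused_err:.2f} m")
```

In this sketch the IMU-only run drifts steadily because of the uncorrected bias, while the fused run is pulled back each time a fix arrives. During the simulated outage the position variance keeps growing, which is how such a filter signals that it needs support from another aiding source.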

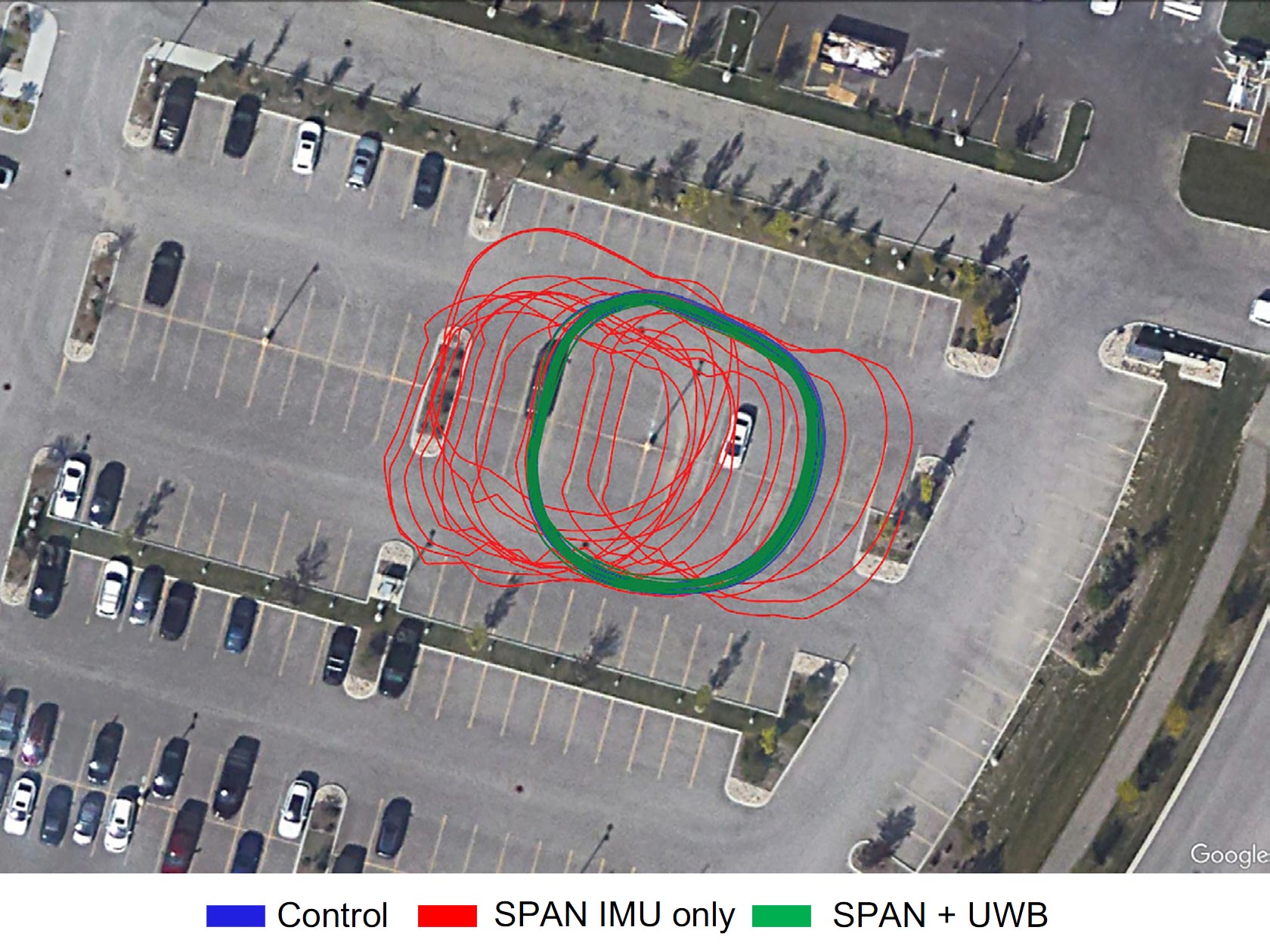

This article explains what sensor fusion is and why it’s needed for positioning, and describes the types of sensors used to achieve the goal of safe autonomy, including ADAS, cameras, LiDAR, radar, V2X and high-definition maps. Real-world testing results show how each type of sensor aids positioning in GNSS outage scenarios compared to SPAN GNSS+INS technology.

10-minute GNSS outage error with ultrawideband (UWB) fusion

Read the full article by downloading Velocity 2023
